That's why we use Politifact to scrape our "FAKE-NEWS DATASET".Įven though there are challenges too in a labelling news article but we will be going to cover up that in a further section. So this altered text on model training in ML will give us a biased result every time and the model that we made using this kind of dataset will result into a dumb one which can only predict news articles having keywords like "FAKE", "DID?", "IS?" in it and will not be going to perform well on a new testing set of data. So for example, If you go through the link "BoomLive.in", you will find that the news articles specifying "FAKE" are not in its actual form and altered on basis of some analysis of the fact-checking team.

We want to extract a raw news article without any keywords specifying whether the given news article in a dataset is "FAKE" or not. I go through these news websites to get my FAKE-NEWS Datasetīut honestly speaking, I end up scraping data from one website i.e., Politifact.Īnd there is a strong reason to do so, As you go through the listed links up there, you will conclude that we needed a dataset with already labeled category i.e., " FAKE" but also we don't want our news articles to be in a modified form as such. My Project was basically based on classifying different news articles into two main categories FAKE & REAL.įor this project, The first task was to get a dataset which is already labeled with " FAKE", so this can be achieved by scraping data from some verified & certified news websites, on which we can rely on for fact of news articles and it is really a very difficult task to get genuine " FAKE NEWS".

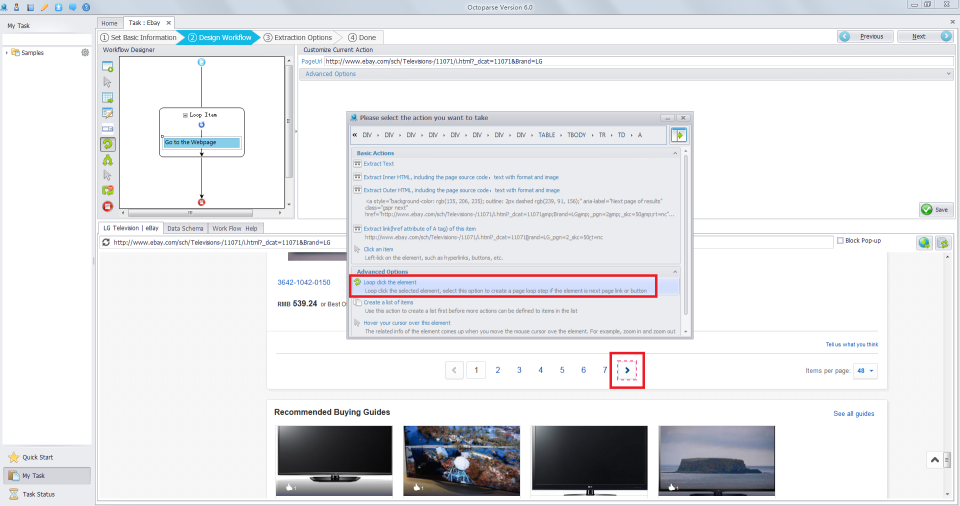

And that's how I did my project from the scratch. So this motivated me to make my own Dataset for my project accordingly. I was needed with a dataset that I couldn't able to find anywhere according to my need. While there are many datasets that you can find online with varied information, sometimes you wish to extract data on your own and begin your own investigation. Whenever we begin a machine learning project, the first thing that we need is a dataset. So, I get motivated to do web scraping while working on my Machine-Learning project on Fake News Detection System. Web Scraping is a technique employed to extract large amounts of data from websites whereby the data is extracted and saved to a local file in your computer or to a database in table (spreadsheet) format. However, we can limit ourselves to collect a large amounts of information from a single source and use it as a dataset. Generally, web scraping involves accessing numerous websites and collecting data from them. Step-10: Making csv file & saving it to your machineĪim of this article is to scrape news articles from different websites using Python. 1.2.1 A brief introduction to webpage design and HTMLġ.2.2 Web-scraping using BeautifulSoup in PYTHON
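As a rough illustration of the scrape-then-save idea described in this article, here is a minimal sketch. It uses only Python's standard library (`html.parser` and `csv`) as a stand-in for BeautifulSoup so that it runs without extra installs. The sample HTML, the `claim` and `label` class names, and the `ClaimParser` and `scrape_to_csv` helpers are all hypothetical and do not reflect Politifact's real page structure.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical markup for illustration only; real fact-checking pages
# use different tags and class names.
SAMPLE_HTML = """
<div class="item"><p class="claim">The moon is made of cheese.</p><span class="label">FAKE</span></div>
<div class="item"><p class="claim">Water boils at 100 C at sea level.</p><span class="label">REAL</span></div>
"""


class ClaimParser(HTMLParser):
    """Collect [claim, label] rows from elements with the assumed classes."""

    def __init__(self):
        super().__init__()
        self.rows = []       # finished [claim, label] rows
        self._field = None   # tracked class of the element we are inside
        self._claim = None   # claim text waiting for its label

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if cls in ("claim", "label"):
            self._field = cls

    def handle_data(self, data):
        text = data.strip()
        if not text or self._field is None:
            return
        if self._field == "claim":
            self._claim = text
        elif self._field == "label" and self._claim is not None:
            self.rows.append([self._claim, text])
            self._claim = None

    def handle_endtag(self, tag):
        # Stop capturing text once any element closes.
        self._field = None


def scrape_to_csv(html, out):
    """Parse the HTML string and write the rows as CSV to a file-like object."""
    parser = ClaimParser()
    parser.feed(html)
    parser.close()
    writer = csv.writer(out)
    writer.writerow(["statement", "label"])
    writer.writerows(parser.rows)
    return parser.rows


# An in-memory buffer stands in for a file opened with open(path, "w", newline="").
buffer = io.StringIO()
rows = scrape_to_csv(SAMPLE_HTML, buffer)
```

Note that the statement text is stored as it appears on the page and the label is kept in its own column, so the text the model trains on carries no verdict keywords.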
